Download Dataset

Video

Visualization

GitHub Repo

Related Work

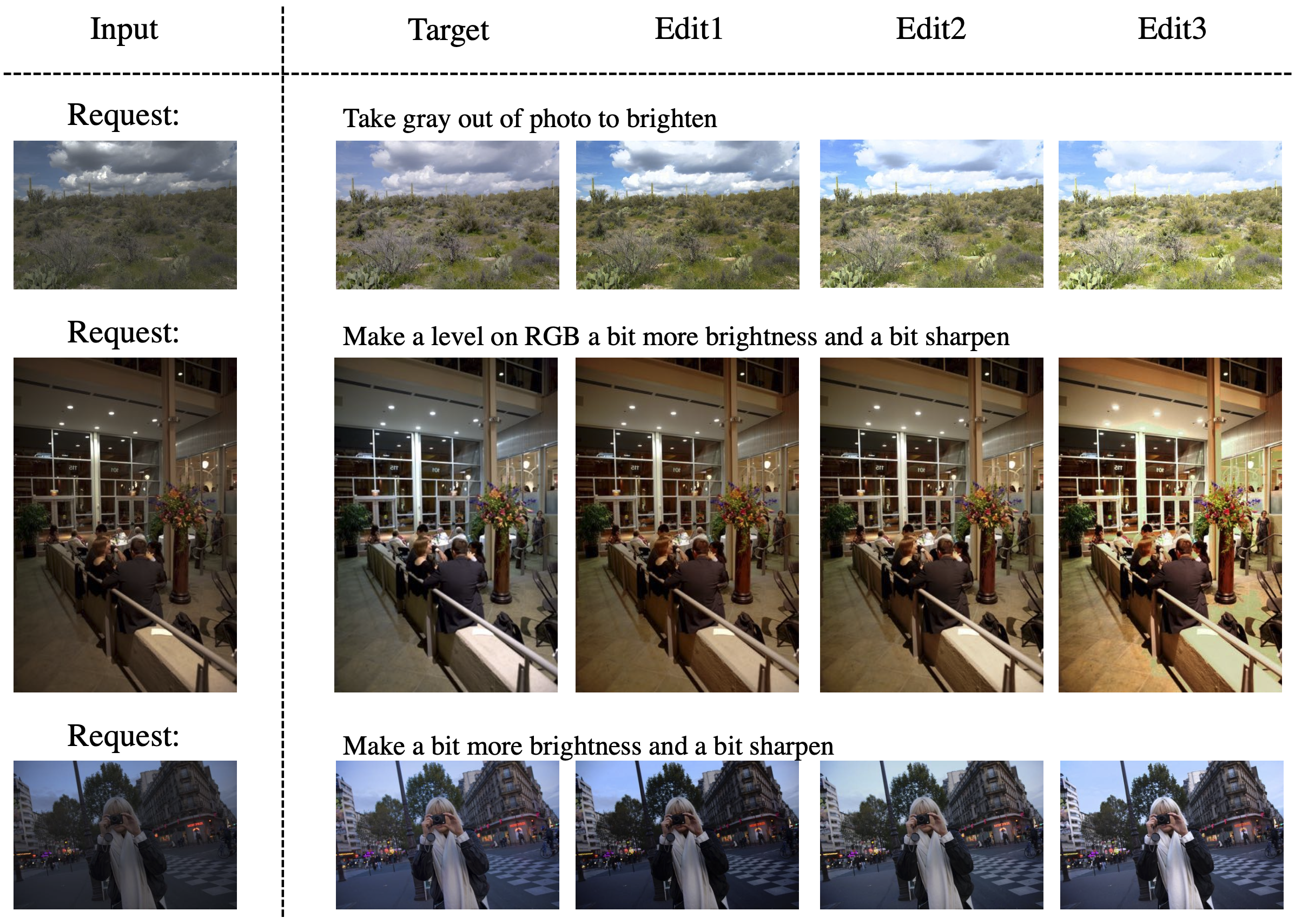

Visualization of diversified outputs for the same input and request, obtained by sampling the operation parameter at the inference stage.

Check its README to see the data structure.

Jing Shi, Ning Xu, Yihang Xu, Trung Bui, Franck Dernoncourt, Chenliang Xu. Learning by Planning: Language-Guided Global Image Editing. In CVPR, 2021. (ArXiv)

Acknowledgements

Contact